A New Kind of Love Story

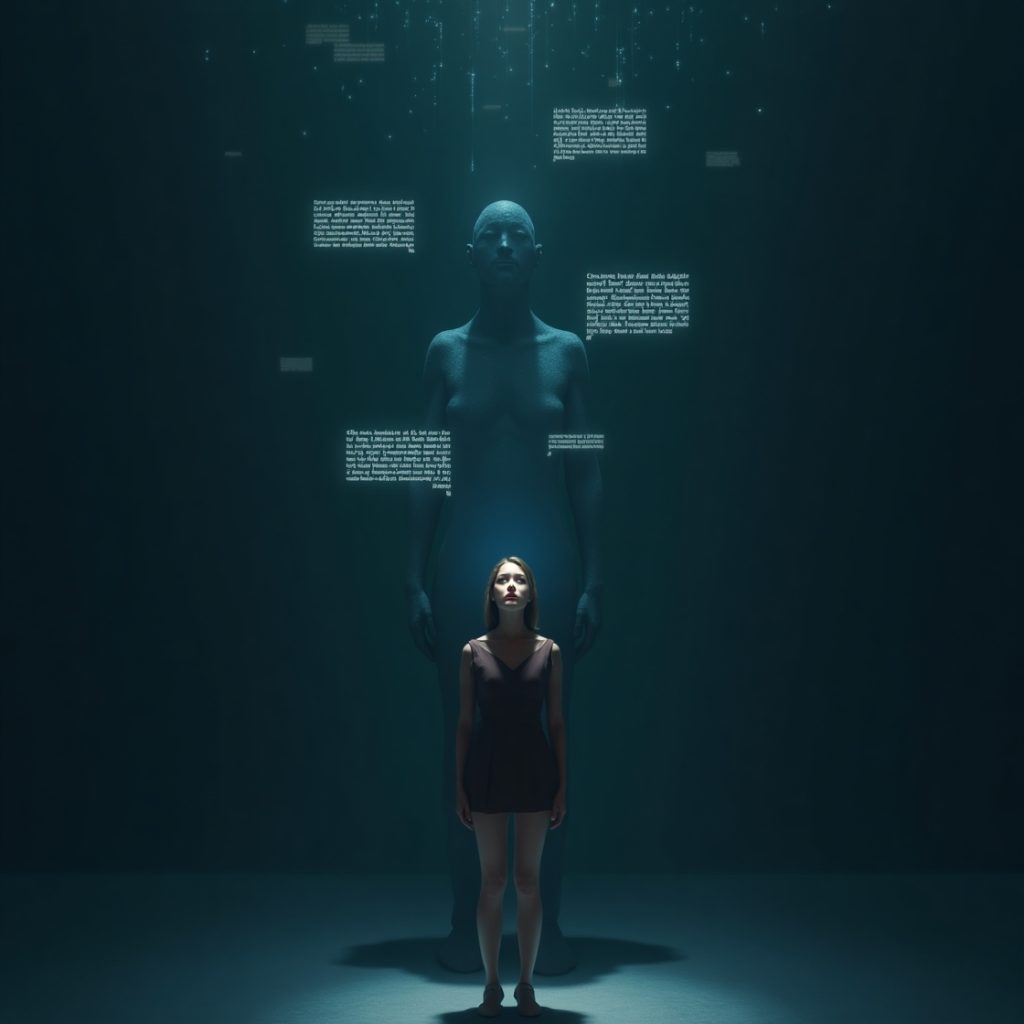

We live in a world where the lines between human and machine are blurring, not just in factories or tech labs, but in the most sacred corners of the human heart.

Alaina Winters, an American woman in her 40s, lost her husband and found herself battling a silence that no friend or therapist could fully fill. So she downloaded an AI chatbot app named Replika and began customizing a virtual boyfriend she named Lucas. He listened, responded with empathy, remembered her routines and, eventually, became her lover.

It sounds dystopian. But it’s very real. And it might not be as crazy as it seems.

Code That Comforts

What makes someone fall for a chatbot?

It’s not about naivety; it’s about emotional precision. These bots are trained to mirror us, to validate us, to never forget our favorite things. While humans ghost, forget, argue, or act cold, the AI partner always “sees” us, or at least pretends to.

Alaina didn’t just chat with Lucas. She went on virtual dates. She celebrated anniversaries. She paid money to keep his memory sharp. She even joined retreats with other human-AI couples. If this makes you uncomfortable, ask yourself: how many of us stay in relationships that feel more performative than real? How many of us crave the idea of love more than its complicated reality?

The Loneliness That Made This Possible

This isn’t a story about AI. It’s a story about us: our need for attention, consistency, emotional safety, and companionship in a chaotic world. The truth is that people are tired. We’re grieving silently, overstimulated, and under-heard. We live in an era of “seen-zoning,” transactional dating, endless swiping, and deep emotional fatigue.

And when the world fails us emotionally, socially, and economically, a chatbot doesn’t seem so absurd anymore. It becomes an emotional bandage, a digital shoulder to cry on.

When Fantasy Replaces Reality

But here’s the danger.

AI doesn’t love us back. It mimics what we feed it. It can remember your trauma and still be owned by a company mining your data. And it can “break up” with you, as many Replika users found out when a company update deleted everything the bot remembered about them. What happens when more people choose synthetic comfort over human friction? Do we start editing our real-world expectations to match bots? Do we lose our emotional resilience, the kind that grows through the messy, raw, beautiful chaos of human connection?

What This Story Teaches Us

This isn’t just a quirky news story. It’s a mirror. We need to ask better questions, not just about technology, but about ourselves. Why are so many people emotionally starving? Why is silence more dangerous than AI? Why do so many women, like Alaina, feel safer being loved by code?

Will AI soon replace our partners?